Table of Contents

- 1 Making a God of Nothing:

- 2 Summary of the Something from Nothing Argument:

- 3 Problem: The “Nothing” is Actually Something:

- 4 The Philosophy Behind Atheistic “Science”:

- 5 The Signature of God:

- 6 Summary:

Making a God of Nothing:

Modern atheistic scientists such as Stephen Hawking, Lawrence Krauss, and Richard Dawkins claim that pretty much everything ultimately came from essentially nothing, and that the notion of an eternal, omnipotent God is therefore no longer needed to explain anything that exists, including the origin of all living things and of the entire universe. So, where did it all come from? Well, it all came from nothing – literally!

While this is admittedly quite difficult to wrap one’s mind around, even for these atheistic scientists themselves, they argue that, regardless of its apparent conflict with common sense, the science behind the idea of something coming from nothing, even a something the size of the entire universe, is so compelling that common sense can safely be ignored in this case.

Alexander Vilenkin:

One of the first to propose this idea was the noted cosmologist Alexander Vilenkin, who argued in a 1982 paper, “Creation of Universes from Nothing,” that our universe might have arisen via a “quantum fluctuation.”

Stephen Hawking:

This was followed, in 2010, by Stephen Hawking, perhaps the best-known theoretical physicist alive today, who also argued in his book, “The Grand Design”, that the universe came into existence “from nothing.”

“Because there is a law such as gravity, the universe can and will create itself from nothing. Spontaneous creation is the reason there is something rather than nothing, why the universe exists, why we exist. It is not necessary to invoke God to light the blue touch paper and set the universe going….

According to M-theory [a unification of multiple string theories], ours is not the only universe. Instead, M-theory predicts that a great many universes were created out of nothing. Their creation does not require the intervention of some supernatural being or god. Rather, these multiple universes arise naturally from physical law.”

Lawrence Krauss:

Then, in January of 2012 Lawrence Krauss published a book entitled, “A Universe from Nothing: Why There Is Something Rather than Nothing.” This book was basically taken from a transcript of a very successful, very interesting, and even entertaining lecture that Krauss had presented earlier – which I highly recommend watching (YouTube video of that lecture – Link). Now, Krauss is no slouch. He is a well-known American theoretical physicist and cosmologist who is Foundation Professor of the School of Earth and Space Exploration at Arizona State University. So, he should know at least something as to what he’s talking about here – right? It is no surprise, then, that Krauss largely agrees with Hawking:

“If we are all stardust, as I have written, it is also true, if inflation happened, that we all, literally, emerged from quantum nothingness…

It certainly seems sensible to imagine that a priori, matter cannot spontaneously arise from empty space, so that something, in this sense, cannot arise from nothing. But when we allow for the dynamics of gravity and quantum mechanics, we find that this commonsense notion is no longer true. This is the beauty of science, and it should not be threatening. Science simply forces us to revise what is sensible to accommodate the universe, rather than vice versa.”

During a subsequent interview Krauss clarified:

“We don’t know how something can come from nothing, but we do know some plausible ways that it might. That it’s possible to create particles from no particles is remarkable—that you can do that with impunity, without violating the conservation of energy and all that, is a remarkable thing. The fact that “nothing,” namely empty space, is unstable is amazing. But I’ll be the first to say that empty space as I’m describing it isn’t necessarily nothing. Although I will add that it was plenty good enough for Augustine and the people who wrote the Bible. For them an eternal empty void was the definition of nothing, and certainly I show that that kind of nothing ain’t nothing anymore.” (Link)

Richard Dawkins:

Of course, Richard Dawkins didn’t get that last part from Krauss’s book – the part about “empty space” not really being comprised of “nothing”. Instead, Dawkins evidently thought that Krauss (and Hawking) had actually explained the origin of everything starting with absolutely nothing. He was therefore delighted with both of the books from Hawking and Krauss since he saw these books as supporting his own atheistic philosophy – which only stands to reason since both Hawking and Krauss are also atheists for similar reasons. Dawkins even wrote the afterword to Krauss’s book in which he boasts that Krauss did for physics what Charles Darwin did for biology – kicked the last vestiges of God right out of the equation:

“Even the last remaining trump card of the theologian, ‘Why is there something rather than nothing?,’ shrivels up before your eyes as you read these pages. If On the Origin of Species was biology’s deadliest blow to supernaturalism, we may come to see A Universe From Nothing as the equivalent from cosmology.” – Richard Dawkins (Link)

To be fair, however, Krauss himself called Dawkins’ comparison between his book and Darwin’s a bit “pretentious”, but still decided to include it in the final published book, probably for marketing reasons:

“Richard Dawkins wrote the afterword for the book—and I thought it was pretentious at the time, but I just decided to go with it—where he compares the book to The Origin of Species. And of course as a scientific work it doesn’t come close to The Origin of Species, which is one of the greatest scientific works ever produced. And I say that as a physicist; I’ve often argued that Darwin was a greater scientist than Einstein. But there is one similarity between my book and Darwin’s—before Darwin life was a miracle; every aspect of life was a miracle, every species was designed, etc. And then what Darwin showed was that simple laws could, in principle, plausibly explain the incredible diversity of life. And while we don’t yet know the ultimate origin of life, for most people it’s plausible that at some point chemistry became biology. What’s amazing to me is that we’re now at a point where we can plausibly argue that a universe full of stuff came from a very simple beginning, the simplest of all beginnings: nothing. That’s been driven by profound revolutions in our understanding of the universe, and that seemed to me to be something worth celebrating, and so what I wanted to do was use this question to get people to face this remarkable universe that we live in.” (Link) – Lawrence Krauss

Of course, Krauss is right when he points out that the whole idea of something coming from “nothing” goes very much contrary to “common sense”. After all, no one has ever seen that happen before. No one has seen a coffee cup, much less an entire universe, just pop into existence out of nothing – not even the something of empty space. So how, exactly, is the entire universe supposed to have come from “nothing”?

Summary of the Something from Nothing Argument:

Well, to make a long story short, the idea is based on theories of quantum mechanics and string theories which suggest that space and time are made of a kind of underlying fabric which in turn can sustain particles – which form the building blocks of atoms. When there are no particles in a particular region of space, that region is described as a “vacuum” in space. Now, to create a particle in the vacuum of space one must “excite” the underlying quantum “field” of space-time to produce a kind of “ripple” in the pond – so to speak. And, this “excitement” of the underlying field produces a particle at that location. Different fields have different names and physical features. There is an electron field, a photon field, a Higgs field, among many many others. Ripples in these fields are experienced by us as particles.

Again, one can think of a field like the surface of a pond. A smooth surface corresponds to the vacuum of “empty” space, where there are no particles. However, if a stone or pebble is tossed into the pond, waves or “fluctuations” are formed.

In short, you can have a pond without ripples, but not ripples without a pond. In the same way, there can be fields without particles, but not particles without fields.

Zero Sum Energy Universe:

However, isn’t the creation of the very ripples on a pond equivalent to the creation of energy? If so, doesn’t this violate the Law of the Conservation of Energy? – a basic law of physics that states that the energy of a closed system cannot be created or destroyed (Link)? Physicists get around this little problem for the formation of the universe by showing that the total energy of the universe is actually zero. That’s right – zero total energy within the entire universe. Michio Kaku (professor of theoretical physics at the City College of New York and CUNY Graduate Center) explains this seeming paradox:

Problem: The “Nothing” is Actually Something:

Now, random fluctuations in a field can give rise to particles. There seems to be good scientific evidence for this that has been known and repeatedly demonstrated now for many decades. But, is this really the creation of something from “Nothing”? Quite clearly, the answer is: absolutely not! In order to produce waves in the pond the pond itself must first be there. The same is true for particles. Before matter can exist, before subatomic particles can exist, the underlying fabric of space must first exist. Without the pre-existence of this fabric of space and its fields, nothing would be able to “fluctuate” and produce particles. Beyond this, the underlying laws of physics, the “fundamental constants” that govern these things must also pre-exist the formation of particles.

“The salient point has to do with how quantum mechanics works. In quantum mechanics one always considers some physical “system”, which has various possible “quantum states”, and which is governed by certain well-defined “dynamical laws.” These dynamical laws that govern the particular system and the fundamental principles of quantum mechanics allow one to calculate the probability that the system will make a transition from one of its states to another. To take a simple example, the system might be an atom of hydrogen, and its states would be the different “energy levels” of the atom…

These laws would govern not only how many universes there were or could be, but the characteristics of these universes, such as how many dimensions of space they could have and what kinds of matter and forces they could contain.” (Link)

- Stephen M. Barr, a physicist at the University of Delaware in his 2010 review of Hawking’s ideas in “Much Ado About “Nothing“.

Unfortunately, Krauss (and Hawking) fell into the same trap of equating a vacuum with nothing – both giving the strong impression in their respective books that something was really nothing just because the something, “dark energy” in this case, is invisible and non-physical. In March of 2012, David Albert (a philosopher and theoretical physicist at Columbia University) wrote a harsh, but what many consider a fair, rebuke of Krauss’s book in his New York Times review (Link):

“Pale, small, silly, nerdy accusation that religion is, I don’t know, dumb…

Relativistic quantum fields… have nothing whatsoever to say on the subject of where those fields came from, or of why the world should have consisted of the particular kinds of fields it does, or of why it should have consisted of fields at all, or of why there should have been a world in the first place. Period. Case closed. End of story.

Relativistic quantum field theoretical vacuum states — no less than giraffes or refrigerators or solar systems — are particular arrangements of elementary physical stuff. The true relativistic quantum field theoretical equivalent to there not being any physical stuff at all isn’t this or that particular arrangement of the fields — what it is (obviously, and ineluctably, and on the contrary) is the simple absence of the fields! The fact that some arrangements of fields happen to correspond to the existence of particles and some don’t is not a whit more mysterious than the fact that some of the possible arrangements of my fingers happen to correspond to the existence of a fist and some don’t. And the fact that particles can pop in and out of existence, over time, as those fields rearrange themselves, is not a whit more mysterious than the fact that fists can pop in and out of existence, over time, as my fingers rearrange themselves. And none of these poppings — if you look at them aright — amount to anything even remotely in the neighborhood of a creation from nothing.”

The Philosophy Behind Atheistic “Science”:

Of course, in subsequent interviews about his book Krauss did admit that he wasn’t exactly starting with absolutely nothing – that he was starting with quite a bit actually. So, why did he suggest such a thing in the very title of his book?

“If I’d just titled the book ‘A Marvelous Universe,’ not as many people would have been attracted to it.” (Link) – Krauss

Krauss knew what he was doing and he took full advantage of the marketing potential of the title he chose as well as the implication that God was most likely no longer needed in physics – that ultimately the only something that is eternal is the inflationary universe that gives rise to the “multiverse” or an infinite number of other universes that are each different from our own and from each other (Link). So, while something exists and while that something is eternal and extremely powerful and creative, it isn’t God and God is not required to explain any of it – according to Krauss.

“If the multiverse really exists, then you could have an infinite object—infinite in time and space as opposed to our universe, which is finite. That may beg the question as to where the multiverse came from, but if it’s infinite, it’s infinite. You might not be able to answer that final question, and I try to be honest about that in the book. But if you can show how a set of physical mechanisms can bring about our universe, that itself is an amazing thing and it’s worth celebrating. I don’t ever claim to resolve that infinite regress of why-why-why-why-why; as far as I’m concerned it’s turtles all the way down. The multiverse could explain it by being eternal, in the same way that God explains it by being eternal, but there’s a huge difference: the multiverse is well motivated and God is just an invention of lazy minds.” – Krauss, 2012 Interview (Link)

In his book Krauss adds:

“The universe is the way it is, whether we like it or not. The existence or nonexistence of a creator is independent of our desires. A world without God or purpose may seem harsh or pointless, but that alone doesn’t require God to actually exist.” – Krauss, A Universe from Nothing

George Ellis (physicist, mathematician, and cosmologist at the University of Cape Town) also commented on Krauss’s book, describing A Universe From Nothing as a “kind of attempt at philosophy.” (Link):

“[Krauss] is presenting untested speculative theories of how things came into existence out of a pre-existing complex of entities, including variational principles, quantum field theory, specific symmetry groups, a bubbling vacuum, all the components of the standard model of particle physics, and so on. He does not explain in what way these entities could have pre-existed the coming into being of the universe, why they should have existed at all, or why they should have had the form they did. And he gives no experimental or observational process whereby we could test these vivid speculations of the supposed universe-generation mechanism. How indeed can you test what existed before the universe existed? You can’t.

Thus what he is presenting is not tested science. It’s a philosophical speculation, which he apparently believes is so compelling he does not have to give any specification of evidence that would confirm it is true. Well, you can’t get any evidence about what existed before space and time came into being. Above all he believes that these mathematically based speculations solve thousand year old philosophical conundrums, without seriously engaging those philosophical issues. The belief that all of reality can be fully comprehended in terms of physics and the equations of physics is a fantasy. As pointed out so well by Eddington in his Gifford lectures, they are partial and incomplete representations of physical, biological, psychological, and social reality.

And above all Krauss does not address why the laws of physics exist, why they have the form they have, or in what kind of manifestation they existed before the universe existed (which he must believe if he believes they brought the universe into existence). Who or what dreamt up symmetry principles, Lagrangians, specific symmetry groups, gauge theories, and so on? He does not begin to answer these questions. It’s very ironic when he says philosophy is bunk and then himself engages in this kind of attempt at philosophy.” – George Ellis (Link)

The Signature of God:

Even though Krauss, Hawking, and other atheistic scientists like them have yet to explain the origin of many things that are required for our universe to exist, their argument is based on the idea that all these required things already have an eternal existence of their own – and that is why there is no need to invoke God to explain any of it.

So, does that mean that God has in fact been kicked out of the equation? That there really is no signature anywhere in our universe of a God, or even a God-like hand, in the origin of anything?

Well, I would suggest to Hawking, Krauss, Dawkins, and those “new atheists” like them that God’s signature can be found throughout nature, from the fundamental constants of the universe to the origin of the tiniest living thing on this planet. It is just that the philosophy of these atheistic scientists blinds them to the very strong scientific/empirical evidence that literally screams design.

Starting Entropy of the Universe:

The Second Law of Thermodynamics:

One of the most well supported and definitive laws of science is the law of entropy – otherwise known as the Second Law of Thermodynamics (2LoT). What the 2LoT basically says is that energy always flows from areas of higher concentration to areas of lower concentration within a closed system until equilibrium is reached. At this point, there is no longer any further flow of energy within the system that can be harnessed to do “useful work”. This means, of course, that perpetual motion machines are impossible because once a system reaches maximum entropy, the homogeneous diffusion of its energy will no longer drive any additional preferential motion within that system. This law is considered so solid and so well tested that if any other hypothesis or theory challenges it, the challenger is expected to prove to be in error.

The law that entropy always increases holds, I think, the supreme position among the laws of Nature. If someone points out to you that your pet theory of the universe is in disagreement with Maxwell’s equations – then so much the worse for Maxwell’s equations. If it is found to be contradicted by observation – well, these experimentalists do bungle things sometimes. But if your theory is found to be against the second law of thermodynamics I can give you no hope; there is nothing for it but to collapse in deepest humiliation. – Sir Arthur Stanley Eddington, The Nature of the Physical World (1927)
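As a rough illustration of the Second Law as described above, the following sketch brings two identical bodies at different temperatures into contact and computes the total entropy change once they reach equilibrium. The function name `equalize`, the constant heat capacity, and the sample temperatures are assumptions made for this example only; it is a textbook idealization, not a model of the universe.

```python
import math

def equalize(t_hot, t_cold, heat_capacity=1.0):
    """Two identical bodies with constant heat capacity exchange heat
    until they share one temperature (hypothetical toy model).

    Returns (final_temperature, total_entropy_change)."""
    # Equal heat capacities: the final temperature is the average.
    t_final = (t_hot + t_cold) / 2.0
    # Entropy change of each body: C * ln(T_final / T_initial).
    ds_hot = heat_capacity * math.log(t_final / t_hot)    # negative
    ds_cold = heat_capacity * math.log(t_final / t_cold)  # positive
    return t_final, ds_hot + ds_cold

t_final, ds_total = equalize(400.0, 200.0)
print(t_final)    # common temperature in kelvin
print(ds_total)   # total entropy change, in units of the heat capacity
```

The hot body loses entropy and the cold body gains more than the hot body lost, so the total is positive. Once both bodies are at the same temperature, a further call such as `equalize(300.0, 300.0)` returns a change of zero: no flow remains that could be harnessed.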

Origin of the Universe’s Low Entropy:

So, what does entropy have to do with the arguments presented by Krauss and Hawking regarding the origin of the universe? Well, let’s say that Michio Kaku is right – that the total energy of the universe is zero and that therefore universes can be generated for “free” and that we can actually have a truly “free lunch”. While this would avoid a violation of the First Law of Thermodynamics (a version of the Law of Conservation of Energy), it wouldn’t escape a seeming violation of the Second Law of Thermodynamics. How is that? Well, while one might argue for a zero sum energy within a universe from the very beginning of that universe, the problem is that it is very hard to rationally explain very low entropy levels at the start of a universe that are very close to zero as well. After all, according to Sir Roger Penrose, the degree of precision of the original entropy of the universe had to be accurate to within one part in 10^(10^123) (Penrose, 1989, The Emperor’s New Mind, pp 339-345; Link).

That’s one part in 1 followed by 10^123 zeros! – a number impossible to even write down in normal notation since there are only around 10^80 atoms in the entire universe. If you could write a zero on each one of them there still wouldn’t be enough atoms to write down the number. In other words, the original entropy of our universe was extremely, extremely low – unimaginably so. Clearly then, the Big Bang was an extremely special kind of explosion – much more special, precise, guided and fine-tuned than an “explosion” that would be able to create a Boeing 747 in a junkyard.
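The arithmetic behind the "not enough atoms" remark can be checked directly. The sketch below simply takes the two figures quoted in the text (Penrose's exponent of 10^123 and roughly 10^80 atoms) as given; the variable names are made up for this illustration.

```python
# Figures taken from the text above, not independently derived.
zeros_needed = 10**123      # zeros in "1 followed by 10^123 zeros"
atoms_in_universe = 10**80  # rough atom count quoted in the text

# How many zeros each atom would have to carry to write the number out.
zeros_per_atom = zeros_needed // atoms_in_universe

print(zeros_needed > atoms_in_universe)  # one zero per atom is not enough
print(len(str(zeros_per_atom)) - 1)      # power of ten of the shortfall
```

Python integers have arbitrary precision, so 10^123 itself is easy to hold in memory. What cannot be written out is the number with that many digits.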

What then is it that maximizes the thermodynamics of a closed system so that it can produce any degree of “useful work”? – a situation where the energy within a system is not completely “homogenized” (or completely clumped up in the case of gravitationally-attracted bodies), but is highly structured to allow for a flow of energy (a whole lot of it) within the closed system?

Physicists simply have no apparent explanation for the origin of this very-low entropic state of the universe from natural law. And yet, this very low entropic state is required in order for the universe to be anything other than unusable energy – incapable of doing much of anything. There simply is no known physical means of reversing the direction of the entropy of a closed system. Once the entropy of a closed system reaches its maximum value, it cannot be reduced below that maximum value from within itself. It’s like trying to pull yourself up by your own bootstraps. It just doesn’t work.

For example, what happens to the entropy of a closed system, like a box, filled with gas molecules that have already reached homogeneous distribution within the box if the size of the box is reduced? Nothing. The heat of the system is increased, but the entropy of the system remains the same as it was before – maximized. What happens to the entropy if the size of the box is then very gradually increased? Nothing. The heat level decreases, but the entropy of the closed system remains the same – maximized. The only way to reduce the entropy of this system below the maximum level is to act on it from outside of the box. The idea that universes can potentially collapse back on themselves into a singularity and then explode yet again in another “Big Bang” wouldn’t remotely solve this problem of reversing a completely homogenized system into a non-homogenized system from within itself. Once entropy is maximized, that’s it. There is no known way to reverse the clock or the direction of time.
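The box example can be put in numbers under some explicit assumptions that are not in the text: the gas is an ideal monatomic gas, the box is thermally insulated, and its size is changed slowly enough to be reversible. Under those assumptions the standard ideal-gas entropy formula gives a net change of zero, even though the temperature changes. The function name and sample values below are hypothetical.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol*K)

def adiabatic_entropy_change(v1, v2, t1, n=1.0, gamma=5.0 / 3.0):
    """Entropy change of an ideal gas whose volume goes slowly from v1 to v2
    in an insulated box (reversible adiabatic process; assumed idealization).

    Returns (final_temperature, entropy_change)."""
    cv = R / (gamma - 1.0)                # molar heat capacity at constant volume
    t2 = t1 * (v1 / v2) ** (gamma - 1.0)  # from T * V**(gamma - 1) = constant
    # Ideal-gas entropy change: n*Cv*ln(T2/T1) + n*R*ln(V2/V1)
    ds = n * cv * math.log(t2 / t1) + n * R * math.log(v2 / v1)
    return t2, ds

t2, ds = adiabatic_entropy_change(v1=2.0, v2=1.0, t1=300.0)
print(t2)  # temperature rises when the box is halved
print(ds)  # entropy change is zero up to floating-point rounding
```

The temperature term and the volume term cancel exactly, which is the quantitative version of "the entropy of the system remains the same" for this idealized case.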

A Much Smaller Universe?

However, just for argument’s sake, let’s just assume that somehow, some way, the “Big Bang” was able to create a universe with a low level of starting entropy. What are the odds that a universe the size of ours would be produced? The odds would be one chance in 1 followed by 10^123 zeros! It is then extraordinarily unlikely that a universe of our size and entropic level would have been produced. The odds strongly favor a much, much smaller universe – even given that low levels of entropy could be produced at all without outside direction and input.

On the other hand, given an infinite number of universes, anything is theoretically possible – which itself presents a huge problem for the rational basis of science itself. If anything goes, if anything can and will happen, if statistical odds don’t matter, where is the rational basis for science?

Inflation:

Many of the modern explanations for a low-entropy arrow of time involve a theory called “Inflation” – the idea that a strange burst of anti-gravity ballooned the primordial universe to an astronomically larger size, smoothing it out into what corresponds to a very low-entropy state from which subsequent cosmic structures could emerge. But explaining inflation itself seems to require even more fine-tuning… which many naturalistic physicists don’t like. Inflation also seems to require an infinite number of other universes, which are used to explain the required fine-tuned features of our universe. “The problem is that this wreaks havoc on probability judgments. If your sample space is infinite, it does not appear possible to have a well-defined probability measure to underwrite your probability and likelihood judgments. This problem of infinities and probabilities in eternal inflation-based cosmologies is well-known. However, it is also well known that there is no current satisfactory solution to the problem.” (Weaver, 2013)

In other words, inflationary models end up undermining science itself because they remove the basis of rational likelihood judgments. “It doesn’t make any sense to say what inflation predicts, except to say it predicts everything” (Steinhardt, 2014).

Indeed, inflation, like string theory, has always suffered from what is sometimes called the “Alice’s Restaurant Problem.” Like the diner eulogized in the iconic Arlo Guthrie song, inflation comes in so many different versions that it can give you “anything you want.” In other words, it cannot be falsified, and so… it is not really a scientific theory.

John Horgan, 2014 – science journalist and Director of the Center for Science Writings at Stevens Institute of Technology

Eternal Inflation = No Maximum Entropy:

Yet another inflation proposal assumes that the universe has an unlimited capacity for entropy (i.e., there is no “maximum” level of entropy within the universe). According to this view,

“If we assume there is no maximum possible entropy for the universe, then any state can be a state of low entropy. That may sound dumb, but I think it really works, and I also think it’s the secret of the Barbour et al construction. If there’s no limit to how big the entropy can get, then you can start anywhere, and from that starting point you’d expect entropy to rise as the system moves to explore larger and larger regions of phase space. Eternal inflation is a natural context in which to invoke this idea, since it looks like the maximum possible entropy is unlimited in an eternally inflating universe.”

Alan Guth, 2014, cosmologist who helped pioneer the theory of inflation, Massachusetts Institute of Technology (Link)

So, it really doesn’t matter what the level of entropy was at the beginning of the universe. Since there is no maximum level of entropy, there will always be a relatively “low level” of entropy forevermore compared with the eternally increasing levels of future entropy – which is yet another kind of “free lunch” since it is proposed as a way to get unlimited usable energy for free.

While this option sounds attractive, it still doesn’t explain the extraordinarily low levels of entropy required by our universe at its beginning – in order for our universe to have such a low level of entropy today. Another problem is that a finite amount of energy spread out over an eternally expanding universe, or in infinite degrees of freedom, will eventually become too thinned out to be “useful”. In other words, a finite amount of energy spread out over an effectively infinite “space” is essentially equivalent to no energy. Where does the inflation model get the extra energy as the universe expands toward infinity? No one knows and a “heat death”, where no further “useful work” can be done, seems inevitable. The time for all ordinary matter to disappear has been calculated to be 10^40 years from now. Beyond this, only black holes will remain. And even they will evaporate away after some 10^100 years. (Link, Link)

Consider also that “entropy is unbounded only if there are infinitely many degrees of freedom” within the space-time of our universe. Some believe in an infinite-dimensional space (Hilbert space). However, the degrees of freedom are in fact finite and “bounded”. That means, of course, that entropy can and will eventually be maximized in our universe (Link). So, the question of the origin of the very low entropy of the universe remains…

But, what other option is there?

Eternal Universe:

Of course, there have been various other solutions proposed such as an infinite universe with random local reductions in entropy. For example, in the late 1800s Ludwig Boltzmann (an Austrian physicist and philosopher who first described the fundamental concepts of entropy in his kinetic theory of gases), believed that the universe was eternal, without beginning or end. Given such an infinity of time, anything finite can and will happen an infinite number of times. Given this perspective, fluctuations are statistically bound to happen every now and then. Boltzmann mused that we might live in such an improbable period of time, created by a random fluctuation in the eternal universe, with an arrow of time currently set by a long, slow slide back towards equilibrium.
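To give a feel for how rare such fluctuations are, here is a toy calculation that is not Boltzmann’s own: the chance that every one of N independent gas molecules happens to be in the left half of a box at the same moment is (1/2)^N. The function name is hypothetical, and the logarithm is used because the probability itself underflows ordinary floating point for large N.

```python
import math

def log10_prob_all_in_left_half(n_molecules):
    """log10 of the probability that n independent molecules are all found
    in the left half of a box at once, i.e. log10((1/2)**n). Toy model only."""
    return -n_molecules * math.log10(2.0)

small = log10_prob_all_in_left_half(10)      # ten molecules
mole = log10_prob_all_in_left_half(6.022e23)  # roughly one mole of gas

print(10 ** small)  # about one chance in a thousand
print(mole)         # exponent of ten for a mole of gas
```

For ten molecules the fluctuation is merely uncommon. For a mole of gas the exponent is on the order of minus 10^23, which is why such an event is only "bound to happen" if truly unlimited time is available.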

Again, however, the idea of an infinite universe explaining everything doesn’t really explain anything and undermines the very basis of science itself.

Entropy Reduced by Gravity:

Another idea is that gravity can reduce entropy. How is that? Well, imagine a gas of massive particles, distributed randomly in space. In the absence of other forces, gravity pulls the particles together. As they get closer and closer together, the gas seems to become more “ordered” or, rather, less dispersed, and therefore it would seem that overall entropy should decrease.

One problem, however, is that entropy is really a measure of an ability to extract “useful work” from within a closed system. As particles come together via gravity, their relative motion could be harnessed to do useful work for a while. However, eventually the particles would start to coalesce with each other and the ability to extract further “useful work” from their relative motion would decrease and eventually end – equivalent to a state of “maximum entropy” where no further “useful work” can be produced by the closed system.

On top of this problem consider also that there is gravitational potential energy between the particles in the gas, and when they get closer together, this potential energy decreases. Since energy is conserved, it must be converted to heat. In other words, as the particles get closer together, the gas gets hotter. And, this extra heat is eventually radiated into space and away from the condensing gas cloud. So, again, the overall entropy of the universe still increases toward maximum (Link, Link). It seems like there’s simply no escaping the 2LoT…

No Reasonable Naturalistic Solution:

In short, none of these explanations of the low level of entropy in our universe are very satisfying for various reasons. And, none of them has any real empirical support. They all appear to be on the level of not so good just-so stories invented to try to explain away an otherwise intractable problem of the origin of low entropy. However, like other just-so stories and fairy tales, there is no basis in actual testable, potentially falsifiable, empirical evidence. It’s no better than arguing that, given an infinite number of “Big Bangs” or an infinite number of universes that anything is possible and therefore that anything actually happens – however statistically unlikely it may seem.

A Fine-Tuned Universe:

In a similar line, the origin of the very fine-tuned features of our universe requires very low levels of entropy at the very beginning of time for our universe. Also, if a universe is to support the existence of complex life, its fundamental constants have to be very finely tuned indeed. Obviously, our universe supports complex life, like us, and physicists have discovered numerous fundamental constants of the universe that have to be very precisely balanced so that we could exist here. There are dozens and dozens of these features, which include:

- the electron to proton ratio – standard deviation of 1 in 10^37

- the 1-to-1 electron to proton ratio throughout the universe yields our electrically neutral universe

- the electron to proton mass ratio (1 to 1,836) perfect for forming molecules

- the electromagnetic and gravitational forces finely tuned for the stability of stars at 1 in 10^40

- the gravitational and inertial mass equivalency

- the electromagnetic force constant perfect for holding electrons to nuclei

- the electromagnetic force in the right ratio to the nuclear force

- the strong force (which if changed by 1% would destroy all carbon, nitrogen, oxygen, and heavier elements)

- the cosmological constant controlling the expansion of the universe precise to 1 in 10^120.

All of these parameters have to be very precisely balanced so that our universe could support complex life. For example, if the strength of gravity differed by just 1 part in 10^40 (1 with 40 zeros after it – the equivalent of moving less than one inch on the universe-long ruler), there would be no life-sustaining planets. If the mass ratio between electrons and protons were slightly different, building blocks for life such as DNA could not be formed. Collectively, the known fundamental constants of the universe are thought to require a degree of precision of one part in over 10^500.
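
The ruler analogy can be checked with a rough calculation. The sketch below assumes the "universe-long ruler" spans the observable universe, taken as roughly 8.8e26 metres across; that figure, and the names `OBSERVABLE_UNIVERSE_DIAMETER_M` and `ruler_shift_inches`, are illustrative assumptions rather than anything stated above.

```python
# Rough scale check for the "universe-long ruler" analogy.
# ASSUMPTION: the ruler spans the observable universe, taken here as
# roughly 8.8e26 metres across (an approximate, commonly cited figure).
OBSERVABLE_UNIVERSE_DIAMETER_M = 8.8e26
METRES_PER_INCH = 0.0254  # exact by definition of the inch


def ruler_shift_inches(fraction: float) -> float:
    """Length, in inches, of `fraction` of the universe-long ruler."""
    ruler_inches = OBSERVABLE_UNIVERSE_DIAMETER_M / METRES_PER_INCH
    return ruler_inches * fraction


shift = ruler_shift_inches(1e-40)
print(f"1 part in 10^40 of the ruler is about {shift:.1e} inches")
```

Under that assumption the shift comes out many orders of magnitude smaller than an inch, so "less than one inch" is, if anything, a very conservative way of putting it.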

So, what is the origin of these extraordinarily fine-tuned features of our universe necessary for life? There is no inherent reason or requirement for these features to be fine-tuned like they are. And, statistically, it would be extremely unlikely that all of these fine-tuned features of the universe would just come about by random chance. So, what is the explanation – outside of intelligent design via a God or God-like designer?

The most standard explanation coming from the atheist camp for such “anthropic” features of the universe is that it only stands to reason that our universe just so happened to have the proper fine tuning for life. Otherwise, we wouldn’t be here. Yet, this doesn’t explain the origin of the fine-tuned features of the universe. To illustrate, consider the following passage:

“Some have tried to counter with the WAP [weak anthropic principle], saying that we should not be surprised that we do not find features in the universe which are incompatible with our existence. This may be so, but it still does not explain the vast improbability of our existence. And it does not satisfy our desire to know why we exist. To demonstrate this, consider the following analogy:

Suppose you are dragged before a firing squad consisting of 100 marksmen. You hear the command to fire and the crashing roar of the rifles. You then realize you are still alive, and that not a single bullet found its mark. How are you to react to this rather unlikely event?

If we applied a sort of WAP [weak anthropic principle] to the firing squad scenario, we could state the following: ‘Of course you do not observe that you are dead, because if you were dead, you would not be able to observe that fact!’ However, this does not stop you from being amazed and surprised by the fact that you did survive against overwhelming odds. Moreover, you would try to deduce the reason for this unlikely event, which was too improbable to happen by chance. Surely, the best explanation is that there was some plan among the marksmen to miss you on purpose. In other words, you are probably alive for a very definite reason, not because of some random, unlikely, freak accident.

So we should conclude the same with the cosmos. It is natural for us to ask why we escaped the firing squad. Because it is so unlikely that this amazing universe with its precariously balanced constants could have come about by sheer accident, it is likely that there was some purpose in mind, before or during its creation. And the mind in question belongs to God.”

– William Lane Craig (based on an analogy he attributes to John Leslie; Link)

On this, many well-known physicists and astronomers tend to agree.

Sir Roger Penrose:

British mathematical physicist, Sir Roger Penrose, was among the first to voice the obvious philosophical conclusion:

“The extremely high level of fine-tuning astronomers and physicists discern powerfully suggests a purpose behind the universe.”

Sir Roger Penrose, in the movie A Brief History of Time (Burbank, CA: Paramount Pictures Inc., 1992).

Arno Penzias:

“Astronomy leads us to an unique event, a universe which was created out of nothing and delicately balanced to provide exactly the conditions required to support life. In the absence of an absurdly-improbable accident, the observations of modern science seem to suggest an underlying, one might say, supernatural plan.”

Arno Penzias (Nobel prize in physics), Margenau, H and R.A. Varghese, ed. 1992. Cosmos, Bios, and Theos. La Salle, IL, Open Court, p. 83.

Freeman J. Dyson:

“As we look out into the universe and identify the many accidents of physics and astronomy that have worked to our benefit, it almost seems as if the universe must in some sense have known that we were coming.”

Freeman Dyson (mathematical physicist), Scientific American, 224, 1971, p 50.

Sir Fred Hoyle:

Sir Fred Hoyle, the famous British astronomer, argued early on (1951) that the coincidences were just that, coincidences. But by 1953 he had evidently changed his mind, and wrote:

Such properties seem to run through the fabric of the natural world like a thread of happy coincidences. But there are so many odd coincidences essential to life that some explanation seems required to account for them… A superintellect has monkeyed with physics, as well as with chemistry and biology.

Hoyle, Fred. “The Universe: Past and Present Reflections,” in Annual Review of Astronomy and Astrophysics, 20. (1982), p.16.

Paul Davies:

There are also the interesting back-and-forth arguments from Paul Davies, an English physicist. Although he is currently a seemingly conflicted atheist (Link), he was once a kind of theist who argued strongly for what seems like a nearly overwhelming impression of design that most physicists come away with when studying the fine-tuned features of the universe:

“The temptation to believe that the Universe is the product of some sort of design, a manifestation of subtle aesthetic and mathematical judgment, is overwhelming. The belief that there is “something behind it all” is one that I personally share with, I suspect, a majority of physicists. This rather diffuse feeling could, I suppose, be termed theism in its widest sense.” 1

“The force of gravity must be fine-tuned to allow the universe to expand at precisely the right rate. The fact that the force of gravity just happens to be the right number with stunning accuracy is surely one of the great mysteries of cosmology… The equations of physics have in them incredible simplicity, elegance and beauty. That in itself is sufficient to prove to me that there must be a God who is responsible for these laws and responsible for the universe.” 2

Davies, Paul C.W. (Physicist and Professor of Natural Philosophy, University of Adelaide at the time of writing), 1) “The Christian perspective of a scientist,” Review of “The way the world is,” by John Polkinghorne, New Scientist, Vol. 98, No. 1354, pp.638-639, 2 June 1983, p.638 (Link, Link) and 2) Davies in his 1984 book Superforce.

Davies has since given up these theistic thoughts in favor of searching for some way to explain the fine-tuned features of the universe without appealing to anything outside of the universe – i.e., without appealing to either a God or the concept of multiple universes outside of our own. “I would like to try to find an explanation for the universe from entirely within it, without appealing to anything external… We need to try to find the explanation for the universe from within it, from what we see, and not multiply these unseen entities.” (Davies, 2014).

Albert Einstein:

“You may find it strange that I consider the comprehensibility of the world to the degree that we may speak of such comprehensibility as a miracle or an eternal mystery. Well, a priori one should expect a chaotic world, which cannot be in any way grasped through thought… The kind of order created, for example, by Newton’s theory of gravity is of quite a different kind. Even if the axioms of the theory are posited by a human being, the success of such an enterprise presupposes an order in the objective world of a high degree, which one has no a priori right to expect. That is the miracle which grows increasingly persuasive with the increasing development of knowledge.”

Albert Einstein in a letter to a friend (1956, Lettres a Maurice Solovine)

George Greenstein:

“As we survey all the evidence, the thought insistently arises that some supernatural agency – or, rather, Agency – must be involved. Is it possible that suddenly, without intending to, we have stumbled upon scientific proof of the existence of a Supreme Being? Was it God who stepped in and so providentially crafted the cosmos for our benefit?”

George Greenstein (American Astronomer). The Symbiotic Universe: Life and Mind in the Cosmos. (New York: William Morrow, 1988), pp. 26-27

Frank Tipler:

“When I began my career as a cosmologist some twenty years ago, I was a convinced atheist. I never in my wildest dreams imagined that one day I would be writing a book purporting to show that the central claims of Judeo-Christian theology are in fact true, that these claims are straightforward deductions of the laws of physics as we now understand them. I have been forced into these conclusions by the inexorable logic of my own special branch of physics.”

Frank Tipler (Professor of Mathematical Physics), Tipler, F.J. 1994. The Physics Of Immortality. New York, Doubleday, Preface

Charles Hard Townes:

“This is a very special universe: it’s remarkable that it came out just this way. If the laws of physics weren’t just the way they are, we couldn’t be here at all….

Some scientists argue that, ‘Well, there’s an enormous number of universes and each one is a little different. This one just happened to turn out right.’

Well, that’s a postulate, and it’s a pretty fantastic postulate. It assumes that there really are an enormous number of universes and that the laws could be different for each of them. The other possibility is that ours was planned, and that is why it has come out so specially.”

Charles Hard Townes, winner of a Nobel Prize in Physics and a UC Berkeley professor

http://www.berkeley.edu/news/media/releases/2005/06/17_townes.shtml

Naturalistic Arguments for the Origin of the Fine-Tuned Universe:

It isn’t that atheistic physicists fail to recognize the extraordinary fine-tuned features of our universe necessary to support complex life. After all, Hawking, in his 2010 book The Grand Design, quotes the famed astronomer Fred Hoyle, who wrote: “I do not believe that any scientist who examined the evidence would fail to draw the inference that the laws of nuclear physics have been deliberately designed with regard to the consequences they produce…” with Hawking adding, “At the time no one knew enough nuclear physics to understand the magnitude of the serendipity that resulted in these exact physical laws” (p. 159). What then is the answer to a universally recognized problem for the naturalistic perspective?

The Multiverse:

The main naturalistic argument forwarded to explain the fine-tuned features of the universe is the “multiverse” or “multiple universes” argument. String theorists such as Leonard Susskind, Andrei Linde, Stephen Hawking, and the like have argued that there are an enormous number of universes, some 10^500 at least – or even an infinite number of universes besides our own. Given so many universes, each programmed with different values for different fundamental constants (such as the value of the cosmological constant or dark energy), the seeming specialness of our universe becomes statistically likely and therefore not so unique or special among so many options – so many rolls of the dice, so to speak. Eventually, you’re bound to get lucky!

Fundamental Problem with the Multiverse:

The problem here, of course, is that such an argument can be used to explain absolutely everything, however statistically unlikely, as being the result of simply being in the “right universe”. And, of course, if your theory can explain absolutely everything, does it really explain anything? And, for this same reason, it also undermines the very basis of science itself since science is based on determining the predictive power of a particular hypothesis or theory relative to competing options. The multiverse concept removes the rational statistical basis for determining which among many competing options is the most likely explanation of the phenomenon at hand. At this point, all theoretical options become equally likely and science is no longer helpful in the search for truth.

Physicist Paul Steinhardt, who helped create the theory of inflation and the resulting implication of multiple universes, later came to reject it for this very reason – in no uncertain terms:

“Our universe has a simple, natural structure. The multiverse idea is baroque, unnatural, untestable and, in the end, dangerous to science and society.”

“From the very beginning, even as I was writing my first paper on inflation in 1982, I was concerned that the inflationary picture only works if you finely tune the constants that control the inflationary period. Andy Albrecht and I (and, independently, Andrei Linde) had just discovered the way of having an extended period of inflation end in a graceful exit to a universe filled with hot matter and radiation, the paradigm for all inflationary models since. But the exit came at a cost — fine-tuning. The whole point of inflation was to get rid of fine-tuning – to explain features of the original big bang model that must be fine-tuned to match observations. The fact that we had to introduce one fine-tuning to remove another was worrisome. This problem has never been resolved.

But my concerns really grew when I discovered that, due to quantum fluctuation effects, inflation is generically eternal and (as others soon emphasized) this would lead to a multiverse…

To me, the accidental universe idea is scientifically meaningless because it explains nothing and predicts nothing. Also, it misses the most salient fact we have learned about large-scale structure of the universe: its extraordinary simplicity when averaged over large scales…

Scientific ideas should be simple, explanatory, predictive. The inflationary multiverse as currently understood appears to have none of those properties.

These concerns and more, and the fact that we have made no progress in 30 years in addressing them, are what have made me skeptical about the inflationary picture.”

Even MIT Professor Alan Guth, a strong supporter of the theory of inflation (which he helped originate) and the multiverse, has acknowledged that it has some philosophically bizarre implications:

“In a single universe, cows born with two heads are rarer than cows born with one head,” he said. But in an infinitely branching multiverse, “there are an infinite number of one-headed cows and an infinite number of two-headed cows. What happens to the ratio?”

Alan Guth, in an interview with Natalie Wolchover and Peter Byrne (In a Multiverse, What Are the Odds?, November 3, 2014)

Why stop at two-headed cows? After all, the multiverse hypothesis could make 50-headed cows or cows with wings and ruby slippers all unsurprising, since a multiverse could explain all such things. Nothing, absolutely nothing, however crazy, would be surprising since nothing would be predictable – and scientific methodologies would be absolutely pointless.
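
Guth’s question about the ratio can be made concrete with a toy calculation. The sketch below is only an illustration under stated assumptions, not anything taken from the interview: it lists an endless herd by repeating a short pattern, so that one-headed and two-headed cows are both unlimited in number, and then measures the fraction of two-headed cows among the first cows listed. The name `running_fraction` and the patterns are hypothetical.

```python
from itertools import islice


def running_fraction(pattern: str, n_cows: int) -> float:
    """Fraction of two-headed cows among the first n_cows of an endless
    herd listed by repeating `pattern` ('1' = one head, '2' = two heads)."""
    def herd():
        # Repeat the pattern forever, so both kinds of cow are unlimited.
        while True:
            yield from pattern

    sample = list(islice(herd(), n_cows))
    return sample.count("2") / n_cows


# Both herds contain endlessly many cows of each kind; only the order
# in which they are listed differs.
mostly_one_headed = running_fraction("1112", 100_000)
mostly_two_headed = running_fraction("1222", 100_000)
print(mostly_one_headed, mostly_two_headed)
```

The two listings give different answers even though each herd holds an infinite number of both kinds of cow. With infinities on both sides, the “ratio” depends on how one chooses to count, which is the difficulty Guth is pointing at.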

Paul Davies presents a nice summary of this problem:

“As a matter of fact I believe that if somebody did a proper mathematical analysis, they would find that the complexity of the explanation of the multiverse – an infinite number of universes we don’t see – is the same as the explanation of traditional theology: an infinitely complex God outside the universe that we don’t see. They’re really the same thing, in different language…”

– Paul Davies, Closer to Truth (August 23, 2014)

Except, of course, for the fact that a God would be able to think and reason and act based on compassion and love – while a multiverse would not be able to do this. A God would also be able to deliberately choose to make a predictable universe, based on elegant, even beautiful, mathematical concepts, symmetry, and simple formulas, open to scientific investigation and discovery (in order to reveal himself in a detectable manner) while a multiverse would not be able to do this.

Again, a multiverse could not be counted on to be so consistently predictable – for the very reason that nothing at all would be surprising from the perspective of a multiverse. However, given the hypothesis of a loving God who deliberately created the universe so that he could be discovered via a study of the universe and the universal laws of nature (as Sir Isaac Newton believed), the seemingly contrived mathematical elegance and beauty of the universe as well as its extreme fine tuning and very low levels of starting entropy start to make a whole lot more sense. After all, one would be hard pressed from God’s perspective to make a universe with any greater evidence of deliberate design built into it.

In any case, this problem with the multiverse concept is not lost on a number of other atheistic physicists, to include Lawrence Krauss himself. Krauss actually seems conflicted as he bemoans the situation:

“It’s not clear to me that it is a good thing; a theory that can explain anything, in a sense, one might say explains nothing,” he said. “If any universe you might come up with is consistent with your theory, is there a way to disprove your theory? Is this science?” (Link) – Lawrence Krauss, 2007

Yet, on the other hand, Krauss often begrudgingly writes and argues in favor of the multiverse concept:

You know, [the multiverse] is not a concept that I’m pretty fond of, but… we seemed to be driven there by our theories… You can get rid of stuff in space, the first kind of nothing. You can even get rid of space, but you still have the laws. Who created the laws?

Well, it turns out that we’ve been driven both from ideas from cosmology – from a theory called inflation or even string theory – that suggests there may be extra dimensions – to the possibility that our universe isn’t unique, and moreover, that the laws of physics in our universe may just be accidental. They may have arisen spontaneously, and they don’t have to be the way they are. But if they were any different, we wouldn’t be here to ask the question. It’s called the anthropic idea, and… it may be right.

It’s not an idea I find very attractive, but it may be right. And if it is, then it suggests that even the very laws themselves are not fundamental. They arose spontaneously in our universe, and they’re very different in other universes. And in some sense, if you wish, the multiverse plays the role of what you might call a prime mover or a god. It exists outside of our universe. And some people said, well, you know, physicists have just created this multiverse because they want to get rid of God.

Nothing could be further… from the truth. We’ve been driven to it by our discoveries in cosmology and particle physics. We’ve been driven to that possibility, which seems plausible and maybe even likely. And if as a corollary, it allows for our universe to be spontaneously created and even the laws created, well, that’s OK, but we weren’t driven there because of some philosophical prejudice against a creator. That didn’t even enter into the discussion.

– Lawrence Krauss, 2012 NPR interview (Link)

Philosophical Motivation:

But does Krauss really feel driven to this conclusion, which he knows undermines the very basis of science itself and which really doesn’t sit well with him for that reason, because it is the best interpretation of the empirical evidence in hand? – or because of some other philosophical motivation? Consider the following exchange regarding this very question:

Krauss:

Maybe there is an eternally existing multiverse that we can’t observe or test scientifically? Maybe it has laws that we don’t know about which allow our universe to pop into being? Maybe this popping into being is uncaused? (alarmed) Who made God? Who made God? Religious people are stupid because they just assume brute facts. Religious people are against the progress of science, they don’t want to figure out how things work. But naturalists like me let the facts determine our beliefs. Philosophers are stupid, they know nothing!

Brierley:

Do you see any evidence of purpose in the universe?

Krauss:

Well, maybe I would believe if the stars lined up to spell out a message from God.

Brierley:

Actually no, that wouldn’t be evidence for God on your multiverse view. If there are an infinite number of universes existing for an infinite amount of time, then anything can happen no matter how unlikely it is. Therefore, no evidence could convince you that God exists, since the unobservable, untestable, eternal multiverse can make anything it wants.

Krauss:

That’s a true statement, and very convenient for atheists who don’t want to be accountable to God, don’t you think?…

You talk about this god of love and everything else. But somehow if you don’t believe in him, you don’t get any of the benefits, so you have to believe. And then if you do anything wrong, you’re going to be judged for it. I don’t want to be judged by god; that’s the bottom line (podcast: 58:01).

________

Justin Brierley, ‘Unbelievable: A Universe From Nothing? Lawrence Krauss vs. Rodney Holder’

(Lawrence Krauss in 2012 debate with Rodney Holder – Course director at the Faraday Institute, Cambridge.) (Link, Link)

It seems then like there’s a little bit more going on here beyond a calm, cool, disinterested, even-handed search for empirical scientific truth. It seems like Krauss is fundamentally motivated by a passionate fear of Divine judgement. After all, Krauss himself does not refer to himself as an atheist, but rather an anti-theist. All of this seems rather strange coming from someone who honestly and truly sees no reasonable evidence for a God or God-like creative power behind anything in the natural world.

Artifacts of Design Ok – as Long as it’s Not God:

This is especially apparent given the agreement of Krauss that even if the stars were to suddenly line up and spell out a message from God, in English let’s say, such a message would not be recognized by Krauss as evidence of the Divine hand – because even a fantastic phenomenon like this could be explained by the multiverse hypothesis. At this point, it’s quite clear that Krauss is deliberately fooling himself in a rather desperate effort to deny the existence of God. After all, even Krauss would recognize many other artifacts as intelligently designed – such as a highly symmetrical polished granite cube found on an alien planet by one of our rovers or the first 50 terms of the Fibonacci series embedded in a radio signal coming from an alien planet. He’d quickly and easily recognize phenomena like these as requiring the hand of some kind of alien intelligence – as would pretty much every other scientist in the world. So, what’s the difference? Well, on the one hand you have clear artifacts of intelligent design that do not require God-like power to explain. They could be explained by human-like levels of intelligence, but could not be explained by any other known mindless natural phenomena.
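
To make the Fibonacci example concrete, here is a minimal sketch of what the first 50 terms are and how a run of decoded numbers could be checked against the pattern. It assumes the series is taken to start 0, 1 (some conventions start 1, 1), and the function names are purely illustrative.

```python
def first_fibonacci_terms(count: int) -> list[int]:
    """Return the first `count` Fibonacci terms.

    ASSUMPTION: the series is taken to start 0, 1.
    """
    terms = []
    a, b = 0, 1
    for _ in range(count):
        terms.append(a)
        a, b = b, a + b
    return terms


def looks_like_fibonacci(signal: list[int]) -> bool:
    """True if every value from the third on is the sum of the two before it."""
    return len(signal) >= 3 and all(
        signal[i] == signal[i - 1] + signal[i - 2]
        for i in range(2, len(signal))
    )


terms = first_fibonacci_terms(50)
print(terms[:10])
print(looks_like_fibonacci(terms))
```

A run of fifty numbers that passes a check like this is exactly the kind of specific, rule-governed pattern the argument has in mind.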

On the other hand, ironically, these things that would literally scream intelligent design to every scientist in the world (including Krauss, Hawking, and Dawkins) would also be explained by the multiverse concept – except the multiverse would not be invoked in such a situation by these very same scientists! Why not? Because the question of God isn’t on the line here! – and that’s the real bottom line. That is why the polished granite cubes and other artifacts pictured here are very clear artifacts of intelligent design regardless of where they happen to be found in our universe – while stars suddenly lining up and spelling out the phrase, “This is God. I’ve decided to send you a message!” would not be recognized as requiring intelligent design?! – because a multiverse could easily explain it?! You’ve got to be kidding me!

And Krauss has the temerity to call theologians and philosophers in general self-deluded and downright stupid?! Really? I dare say that someone arguing for a multiverse has very little room to talk!

Clearly then, it is the philosophy of atheism, not physics, that leads to a belief in the multiverse concept – which is, after all, an idea that undermines the very rational basis of scientific inquiry itself.

Abiogenesis and Darwinian Evolution:

History of Something from Nothing:

The whole concept of something coming from nothing has long existed within the minds of scientists and great thinkers for hundreds and even thousands of years. For example, none other than the great genius Leonardo da Vinci himself made a number of drawings of perpetual motion machines that he hoped would make free energy. Of course, he was so consistently disappointed in this effort that he eventually concluded:

“Oh ye seekers after perpetual motion, how many vain chimeras have you pursued? Go and take your place with the alchemists.” – Leonardo da Vinci, 1494

And, before the discovery of microbes and before Pasteur came on the scene, it was widely thought that life arose from nothing – from dust or within rotting meat for instance. This idea was referred to as “spontaneous generation.” Even the scientists of the day thought that fleas could arise from inanimate matter such as dust, or that maggots could spontaneously arise from rotting meat. In fact, the doctrine of spontaneous generation was originally synthesized by Aristotle, who compiled and expanded the work of prior natural philosophers and the various ancient explanations of the appearance of organisms. And, this was the dominant view of philosophers and scientists alike for over two millennia. This view was only decisively dispelled in 1859 by the experiments of Louis Pasteur. John Desmond Bernal suggests that earlier theories such as spontaneous generation were based upon an explanation that life was continuously created as a result of chance events. And this was less than 200 years ago!

Well, old ideas die hard, very hard it seems. After Darwin came on the scene and published On the Origin of Species, ironically in 1859 as well, the whole idea of something coming from nothing came roaring back to life just when it seemed like science had finally caught up with reality. And, we are still dealing with this idea of something coming from nothing as the primary goal for many modern scientists and philosophers of science. Consider, for example, the following excerpt from a 2014 discussion between Brian Greene and Richard Dawkins where Dawkins actually argues that the entire scientific enterprise should be built upon the idea of something coming from nothing:

So, there you have it. The Darwinian idea is basically that simple stuff can spontaneously give rise to more and more functionally complex stuff. Take this idea to its logical conclusion and one arrives at the notion that everything could reasonably come from “virtually nothing” or even “absolutely nothing” as Dawkins puts it. After all, if it can happen with living things, why couldn’t it also happen with everything else as well? It’s a very reasonable conclusion – even more reasonable in fact, in the light of 157+ years of Darwinism, than the popular ideas about abiogenesis before Pasteur came along. The only difference, of course, is that abiogenesis requires a whole lot more time than was previously imagined by those like Aristotle and the scientists during the 1800s who were made to look rather silly by Pasteur’s experiments. Time itself performs the miracle of making something from nothing.

“Time is in fact the hero of the plot … . Given so much time, the ‘impossible’ becomes possible, the possible probable, and the probable virtually certain. One has only to wait; time itself performs the miracles.”

– George Wald (Nobel Laureate), The origin of life, Scientific American 191(2):44–53, August 1954. p. 48

No Free Lunch:

The problem, of course, is that there is no such thing as a free lunch. Pasteur was right back in 1859 and he is still right today. Similar to the entropy problem of thermodynamics, where the entropy of a closed system never spontaneously decreases, the natural tendency of functional information is toward degeneration. It doesn’t spontaneously increase over time without outside input of a superior quality. While it is true that the Earth is not a closed system with respect to thermodynamic entropy (i.e., there is a continuous flow of usable energy from the Sun), the problem is that in order to effectively use this flow of energy there has to be some sort of structure that can take advantage of this energy flow to produce “useful work”. Just because the energy flow is there doesn’t mean that “useful work” of any kind will spontaneously arise within a system. Without a certain degree of pre-established order within a system, no directed, non-random work at all will be done by anything within that system. All that will happen in such a system (like a pot of dirt or a vial of amorphous sludge) if the energy flow increases is that things will get hotter. Atoms and molecules will move faster, but nothing much more interesting will happen.

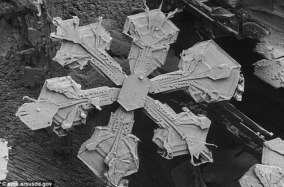

But what about the fact that atoms spontaneously and naturally self-assemble into various kinds of molecules at various temperatures and in the presence of other atoms and molecules? What about the fact that complex structures can and will predictably form, quite naturally, from these molecules under various environmental conditions? For example, what about the fact that simple water molecules can form very complex snowflakes under the right conditions?

While this is true, the information for the formation of the snowflake was already pre-programmed into the molecular structure itself – a priori. The general structure of a snowflake is dependent upon the nature of the water molecule itself and how it interacts with other water molecules. So, there is a certain rather limited degree of “emergence” in form and even function that is possible, but the required information was already there pre-programmed into the system.

However, as the minimum requirement for unique building blocks in specific arrangements increases in order for higher and higher level functional systems to be produced, the very limited pre-programmed information within a given system becomes exponentially less and less able to spontaneously generate the minimum requirements of higher and higher level systems within a given span of time.

For example, the simplest living thing that is currently known was produced in the lab by Craig Venter and his team (March, 2016), starting with the genome of the bacterium Mycoplasma mycoides. What they produced was a self-replicating bacterium that contains just 473 genes (Link) with an overall genome size of around 531,000 base pairs (bp). Outside of the lab, Mycoplasma genitalium has one of the smallest genomes of any free-living organism in the world, clocking in at a mere 525 genes (Link) with a total genome size of around 580,000 bp. For comparison, consider that humans have around 20,000 protein-coding genes and around 6 billion base pairs.
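To put these sizes side by side, here is a minimal arithmetic sketch. It simply takes the figures quoted above at face value; the dictionary names and the `fold_difference` helper are illustrative assumptions, not part of any cited study.

```python
# Figures as quoted in the paragraph above; all names here are illustrative only.
BASELINE = "lab-made minimal cell (Venter, 2016)"

GENOME_SIZE_BP = {
    BASELINE: 531_000,
    "Mycoplasma genitalium": 580_000,
    "human": 6_000_000_000,
}

GENE_COUNT = {
    BASELINE: 473,
    "Mycoplasma genitalium": 525,
    "human": 20_000,  # protein-coding genes only, as stated in the text
}


def fold_difference(larger: float, smaller: float) -> float:
    """Return how many times bigger `larger` is than `smaller`."""
    return larger / smaller


for name in GENOME_SIZE_BP:
    bp_ratio = fold_difference(GENOME_SIZE_BP[name], GENOME_SIZE_BP[BASELINE])
    gene_ratio = fold_difference(GENE_COUNT[name], GENE_COUNT[BASELINE])
    print(f"{name}: {bp_ratio:,.1f}x the base pairs, {gene_ratio:,.1f}x the genes")
```

On these numbers, the human genome is roughly four orders of magnitude larger in base pairs than the minimal cell, while the gene count differs by well under a hundredfold.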

So, it seems like a genome composed of just a few hundred protein-coding genes is relatively simple – right? However, imagine starting with a soup of all the individual atoms that would be required to assemble such a “simple” organism all in one warm little pot somewhere. What would have to happen to get these basic building blocks to self-assemble in just the right way so that a machine-like structure, like a living cell, would spontaneously emerge that itself then has the powers of self-replication? The minimum size and degree of specific arrangement of millions of atoms needed to form such a structure is truly mind boggling. The odds against it happening by random chance alone are astounding. Back in the early 1980s the astronomer Sir Fred Hoyle argued that the odds of such a thing happening were around 1 chance in 10^40,000. Since the number of atoms in the known universe (10^80) is infinitesimally tiny by comparison, he argued that life could not have evolved on Earth, concluding that, “The notion that not only the biopolymer but the operating program of a living cell could be arrived at by chance in a primordial organic soup here on the Earth is evidently nonsense of a high order.” (Fred Hoyle, 1981)
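As a rough illustration of the scale involved, the two exponents quoted above can be compared directly. This sketch takes the quoted figures as given; it does not derive or check Hoyle's estimate, and the constant and function names are assumptions made for the example.

```python
# Exponents as quoted in the text above (attributed there to Hoyle).
# This only compares magnitudes; it does not reproduce how either figure was obtained.
ODDS_EXPONENT = 40_000   # odds quoted as 1 chance in 10^40,000
ATOMS_EXPONENT = 80      # atoms in the known universe quoted as 10^80


def orders_of_magnitude_gap(large_exp: int, small_exp: int) -> int:
    """Return how many powers of ten separate 10**large_exp from 10**small_exp."""
    return large_exp - small_exp


gap = orders_of_magnitude_gap(ODDS_EXPONENT, ATOMS_EXPONENT)

# Python integers have arbitrary precision, so the ratio can also be computed exactly.
ratio = 10**ODDS_EXPONENT // 10**ATOMS_EXPONENT

print(f"10^{ODDS_EXPONENT} / 10^{ATOMS_EXPONENT} = 10^{gap}")
```

In other words, dividing the quoted odds by the quoted number of atoms still leaves a number with almost 40,000 digits.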

Mathematician Chandra Wickramasinghe, as a co-author with Hoyle on this topic, explained his shock at the realization that some God-like higher power seemed to be involved in various features of the natural world:

“From the beginning of this book we have emphasized the enormous information content of even the simplest living systems. The information cannot in our view be generated by what are often called ‘natural’ processes, as for instance through meteorological and chemical processes. . . Information was also needed. We have argued that the requisite information came from an ‘intelligence’.”

Fred Hoyle and Chandra Wickramasinghe, Evolution from Space (1981), p. 148, 150

“It is quite a shock. From my earliest training as a scientist I was very strongly brainwashed to believe that science cannot be consistent with any kind of deliberate creation. That notion has had to be very painfully shed. I am quite uncomfortable in the situation, the state of mind I now find myself in. But there is no logical way out of it. I now find myself driven to this position by logic. There is no other way in which we can understand the precise ordering of the chemicals of life except to invoke the creations on a cosmic scale. . . . We were hoping as scientists that there would be a way round our conclusion, but there isn’t.”

Chandra Wickramasinghe, as quoted in “There Must Be A God,” Daily Express, Aug. 14, 1981 and Hoyle on Evolution, Nature, Nov. 12, 1981, p. 105

RNA World:

Yet, most biologists remained unconvinced. Based on what? Well, based on the idea that if simple things could evolve into more and more complex things once life got started, then there most likely was a way in which life itself spontaneously arose from even simpler non-living things. So, many theories of how abiogenesis might have happened have been put forward over the years. Currently, the most favored hypothesis is known as the “RNA World”, where the first level of complexity to evolve was self-replicating RNA molecules. This would break up the otherwise overwhelming problem of having to come up with millions of precisely organized atomic building blocks in one shot. After all, a self-replicating RNA molecule is relatively small in comparison – just a few dozen nucleotides. And, some researchers claim to have demonstrated how RNA molecules can replicate themselves.

However, there are numerous problems with the concept of an RNA world as a reasonable steppingstone toward the spontaneous production of a living thing, starting with just the most basic building blocks themselves. First off, the production of single RNA nucleotides is somewhat difficult (though it has been observed on occasion – Link), not to mention producing them in any sort of purified concentration. Beyond this, the real problem is that RNA does not even replicate itself as most people imagine.

Self-replication:

The very best that has been experimentally demonstrated is an experiment by Professor Gerald Joyce, who conducted the research with Kellogg School graduate student Tracey Lincoln in 2009 (Link). They demonstrated an RNA molecule linking up two smaller, carefully pre-designed and pre-formed strands of RNA so that the two strands, once combined, form a copy of the original RNA molecule. The original RNA molecule merely catalyzes the formation of a single chemical bond between two pre-synthesized partial RNA chains. However, this original molecule cannot produce itself given only the existence of individual RNA nucleotide monomers. That just doesn’t happen.

As Stephen Meyer pointed out in 2009, “Lincoln and Joyce themselves intelligently arranged the matching base sequences in these RNA chains. They did the work of replication. They generated the functionally-specific information that made even this limited form of replication possible.” (Link)

Stephen Meyer:

Pre-biotic simulation experiments themselves confirm what we know from ordinary experience, namely, that intelligent design is the only known means by which functionally specified information arises.